By Kilimokwanza.org Reporter

Artificial intelligence (AI) has a rich history that dates back several decades. The term “artificial intelligence” was coined in 1956 by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon during the Dartmouth Conference. However, the field’s roots stretch further back, encompassing a range of developments in mathematics, engineering, and computing.

The Early Days and Machine Learning

In the early days, what we now call AI was often referred to as “machine learning” (ML). This term highlights the core concept of machines being able to learn from data and improve their performance over time without being explicitly programmed for every specific task.

Key Milestones in Early AI and Machine Learning:

- 1940s-1950s: Theoretical Foundations

- Alan Turing: In 1950, Turing published “Computing Machinery and Intelligence,” which proposed the Turing Test as a measure of machine intelligence. His work laid the theoretical groundwork for the development of intelligent machines.

- Claude Shannon: Known as the father of information theory, Shannon’s work on digital circuit design theory and data compression algorithms also influenced the development of AI.

- 1950s: Birth of AI and ML

- Dartmouth Conference (1956): This conference marked the official birth of AI as a field of study. Researchers like John McCarthy, Marvin Minsky, and others aimed to explore the possibility of creating machines that could simulate human intelligence.

- Arthur Samuel: Often credited with coining the term “machine learning,” Samuel developed a self-learning checkers program in the 1950s. His work demonstrated that machines could learn and improve from experience.

- 1960s-1970s: Early AI Systems and Algorithms

- Perceptrons: Frank Rosenblatt developed the perceptron, an early type of neural network, in the 1960s. While limited by its inability to solve non-linear problems, it was a significant step toward modern neural networks.

- Symbolic AI: This approach, also known as “Good Old-Fashioned AI” (GOFAI), focused on using logical rules and symbols to represent knowledge. Early AI systems like ELIZA (a simple chatbot) and SHRDLU (a natural language understanding program) were developed during this period.

- 1980s: The Rise of Expert Systems

- Expert Systems: These AI programs used rule-based systems to simulate the decision-making abilities of human experts. Systems like MYCIN, which assisted doctors in diagnosing bacterial infections, were notable examples.

- Backpropagation: In the mid-1980s, Geoffrey Hinton and others rediscovered the backpropagation algorithm for training neural networks, reinvigorating interest in neural networks and machine learning.

- 1990s: Machine Learning Becomes Mainstream

- Support Vector Machines (SVMs): Introduced in the 1990s, SVMs became popular for their effectiveness in classification tasks.

- Bayesian Networks: These probabilistic models, used for reasoning under uncertainty, gained prominence for their applications in various fields, including medical diagnosis and risk assessment.

- 2000s-Present: The Era of Big Data and Deep Learning

- Big Data: The explosion of data generated by the internet and digital devices provided vast information for training machine learning models.

- Deep Learning: This subset of machine learning, involving multi-layered neural networks, achieved significant breakthroughs in tasks like image and speech recognition. Landmark achievements include the development of AlexNet, which won the ImageNet competition in 2012, and the rise of deep learning frameworks like TensorFlow and PyTorch.

- AI in Everyday Life: AI technologies have become ubiquitous, powering applications like virtual assistants (Siri, Alexa), recommendation systems (Netflix, Amazon), and autonomous vehicles.

Modern AI and Machine Learning

Today, AI and machine learning are integral to many aspects of technology and society. The field continues to evolve rapidly, driven by advancements in algorithms, computing power, and the availability of large datasets. Key areas of focus include:

- Natural Language Processing (NLP): Developing AI systems that can understand, interpret, and generate human language.

- Computer Vision: Enabling machines to interpret and process visual information from the world.

- Reinforcement Learning: Training models to make a sequence of decisions by rewarding desired behaviors.

- Ethics and Fairness: Addressing the ethical implications of AI, including bias, transparency, and accountability.

While AI has come a long way from its early days as “machine learning,” the foundational concepts remain central to the field. The journey from simple rule-based systems to sophisticated deep learning models illustrates the incredible progress made and the vast potential for future advancements.

Deep learning is a subset of machine learning that focuses on using neural networks with many layers (hence “deep”) to model and understand complex patterns in data. Here’s an overview of how deep learning works:

Key Concepts in Deep Learning

- Neural Networks: The building blocks of deep learning models, inspired by the structure and function of the human brain. Neural networks consist of layers of nodes (neurons) that process input data and learn from it.

- Layers: Deep neural networks have multiple layers, typically including:

- Input Layer: The first layer that receives the raw input data.

- Hidden Layers: Intermediate layers that transform the input into more abstract representations. The depth (number of hidden layers) distinguishes deep learning from simpler neural networks.

- Output Layer: The final layer that produces the model’s prediction or classification.

- Activation Functions: Functions applied to each neuron’s output to introduce non-linearity, enabling the network to learn complex patterns. Common activation functions include ReLU (Rectified Linear Unit), sigmoid, and tanh.

- Weights and Biases: Parameters within the network that are adjusted during training to minimize the error in predictions. Weights determine the strength of connections between neurons, while biases are added to the neuron inputs.

- Forward Propagation: The process of passing input data through the network layers to obtain an output. Each neuron calculates a weighted sum of its inputs, applies an activation function, and passes the result to the next layer.

- Loss Function: A function that measures the difference between the predicted output and the actual target. Common loss functions include mean squared error (MSE) for regression tasks and cross-entropy loss for classification tasks.

- Backpropagation: The process of adjusting weights and biases based on the error calculated by the loss function. Backpropagation uses the gradient descent algorithm to minimize the loss function by iteratively updating the parameters in the direction that reduces the error.

- Optimization Algorithms: Techniques used to update the weights and biases during training. Gradient descent and its variants (e.g., stochastic gradient descent, Adam) are commonly used optimization algorithms.

Training a Deep Learning Model

- Initialization: The weights and biases are initialized, typically with small random values.

- Forward Pass: Input data is passed through the network, layer by layer, to generate an output.

- Loss Calculation: The loss function computes the error between the predicted output and the actual target.

- Backward Pass (Backpropagation): The gradients of the loss function with respect to each weight and bias are calculated using the chain rule of calculus. These gradients indicate how to adjust the parameters to reduce the error.

- Parameter Update: The optimization algorithm updates the weights and biases based on the gradients, moving the parameters in the direction that decreases the loss.

- Iteration: Steps 2-5 are repeated for many iterations (epochs) until the model’s performance converges or improves satisfactorily.

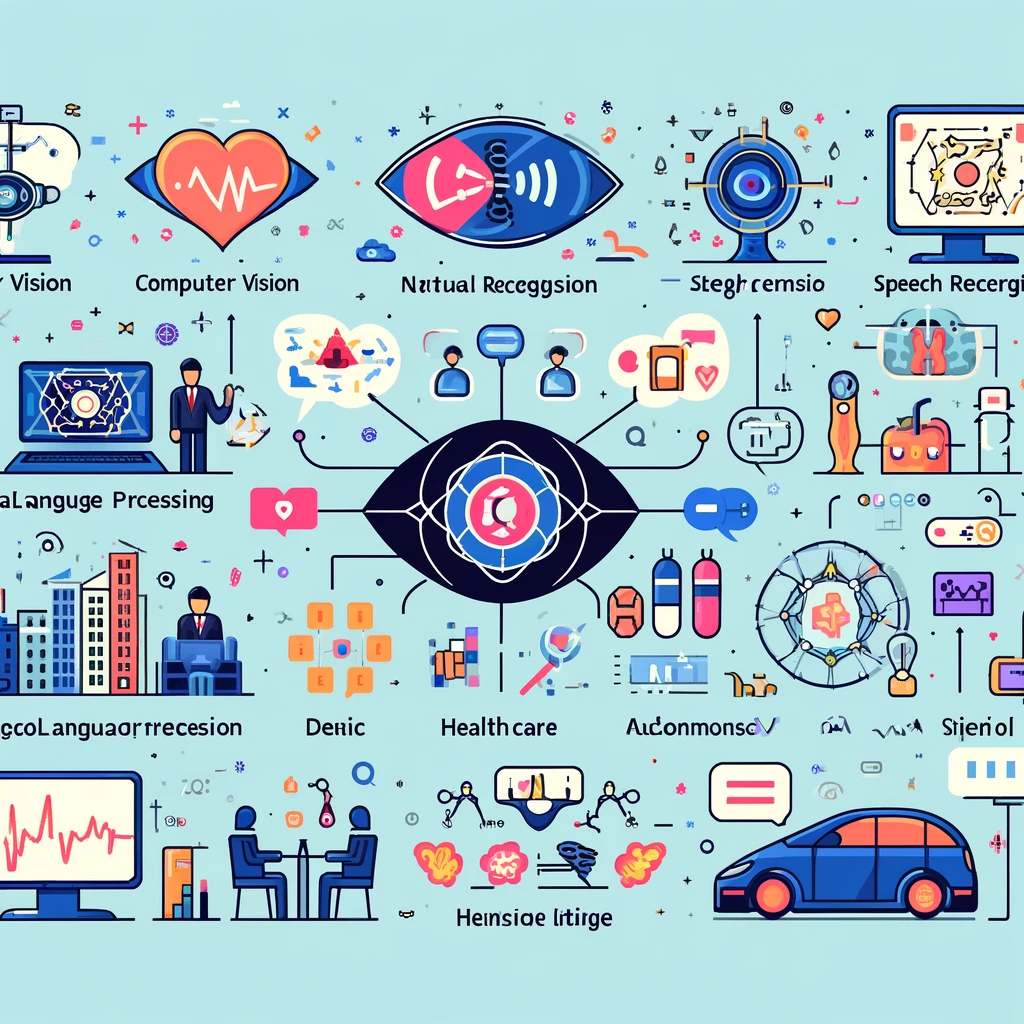

Applications of Deep Learning

Deep learning is applied in various fields, including:

- Computer Vision: Image and video recognition, object detection, and image generation (e.g., convolutional neural networks, or CNNs).

- Natural Language Processing (NLP): Language translation, sentiment analysis, and text generation (e.g., recurrent neural networks, or RNNs, and transformers).

- Speech Recognition: Transcribing spoken language into text.

- Healthcare: Medical image analysis, disease prediction, and drug discovery.

- Autonomous Vehicles: Perception, decision-making, and control systems for self-driving cars.

Summary

Deep learning works by utilizing neural networks with many layers to model complex relationships in data. These networks learn to make accurate predictions or classifications through forward propagation, loss calculation, backpropagation, and parameter updates. The power of deep learning lies in its ability to automatically learn features and representations from raw data, making it highly effective for tasks involving large amounts of data and complex patterns.